Weaponized Innovation: The Predatory Rise of AI in Healthcare

Insurance giants are using algorithms to automate care denial at a scale that defies human accountability. Here is how we stop them.

As a neurodivergent mental health professional in the United States, I’ve been drowning in the disgust related to the American health care system—on both a personal and professional level—for at least two decades.

In this article, I’m writing about:

How health insurance companies scam you with “convenience”

How AI integration makes it easier for them to deny you the care you need

The parallels between big tech and healthcare companies

What you can do about it without waiting for government intervention

TLDR: Your health insurance company hates you, and AI is accelerating their ability to line their pockets, without accountability, at your expense.

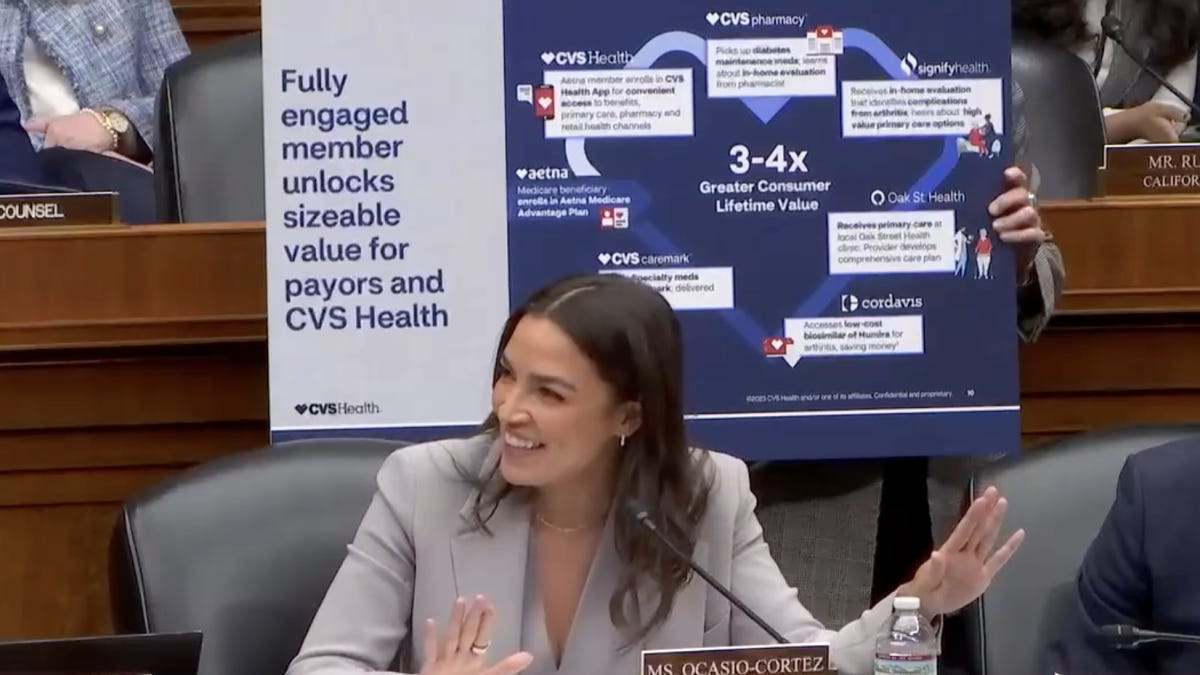

On January 22, 2026, the CEOs of UnitedHealth, CVS, Cigna, Elevance, and Humana testified before Congress about rising healthcare costs, claims denials, and the vertical ecosystem that allows these companies to profit off themselves.

As I watched the bipartisan barrage of questioning, I realized just how much worse our already-broken healthcare system has become in the last few years, and how much of the AI deployed by the C-suites will actually make everything exponentially worse.

Let’s Be Clear: AI Can Be Helpful

Before we get caught up in the system, I want to put on the record that AI has the genuine potential to improve healthcare, if deployed ethically.

It has already been shown to help providers catch cancer earlier and respond more effectively, spend less time on documentation and more time on patients, personalize treatment, and reduce hospital readmissions.

That’s not what I’m writing about today.

The healthcare system was broken long before AI was deployed to decide whether you should be able to use your benefits. It broke decades ago when we allowed massive consolidation, vertical integration, and for-profit insurance companies to dominate a market that should never have been a market in the first place.

Some of these companies have been under investigation by the Federal Trade Commission since 2022, yet little has been done to stop or change the system.

Big Tech But Make It Healthcare

In tech, a few giants quietly run the pipes of the internet; in healthcare, a handful of insurance and pharmacy giants are building the same kind of hidden infrastructure, using vertical integration to own more and more of the steps between your premium dollar and your care.

UnitedHealth Group is one of these vertically integrated companies under investigation. Along with insuring tens of millions of people, they run a big pharmacy benefits business (OptumRx), they own a bank (Optum Bank), and they employ or affiliate with tens of thousands of doctors through Optum.

CVS Health also owns much more than pharmacies these days, including:

Aetna, a major health insurer

Oak Street Health, a chain of primary care clinics

Signify Health, which sends clinicians into people’s homes

Cordavis, a CVS subsidiary that makes biosimilar versions of expensive drugs

CVS Caremark, a pharmacy benefit manager

Companies like this decide what care they cover, how much it costs, whether to cover your medication, how much that costs, and where you can fill it. All while making sure every decision funnels money back to them.

Adding AI to a Broken System

When you deploy AI into a system that’s already optimized for profit over care, you get weaponized automation.

UnitedHealthcare’s denial rate for post-acute care more than doubled between 2020 and 2022—from 10.9% to 22.7%—as they implemented algorithms to automate their review process. When patients actually appeal these denials to federal administrative law judges, about 90% are reversed.

Nine out of ten appealed denials were wrong.

But they’re counting on you not having the energy, time, or knowledge to appeal.

Remember that bank UnitedHealth owns?

After their own cybersecurity breach shut down their payment processing system, thousands of doctors couldn’t get paid for months. So Optum Bank (owned by UnitedHealth) offered emergency loans to keep practices afloat.

Then they started demanding immediate repayment. Sometimes hundreds of thousands of dollars. Within five business days. If practices didn’t pay, Optum threatened to withhold their insurance claim payments until the balance was repaid.

They broke the payment system.

They loaned money to the people they broke.

They used their insurance leverage to force repayment.

It’s not just UnitedHealth Group.

ProPublica reviewed internal documents showing that Cigna doctors spent an average of 1.2 seconds reviewing each of the 300,000 denials it issued in two months. Former Cigna medical directors confirmed it on the record: “We literally click and submit. It takes all of 10 seconds to do 50 at a time.”

Over 80% of those denials got overturned on appeal.

But fewer than 1% of people actually appeal.
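To make that gap concrete, here is a rough back-of-the-envelope sketch in Python. It is an illustration, not a reconstruction of anyone's real data: it assumes the appeal rate and the overturn rate apply to the same pool of 300,000 denials, treats "under 1%" as exactly 1% and "over 80%" as exactly 80%, and uses a made-up function name.

```python
def appeal_outcomes(denials: int, appeal_rate: float, overturn_rate: float) -> dict:
    """Estimate how many denials are ever challenged and how many are reversed.

    Assumption: the overturn rate applies only to denials that are appealed.
    """
    appealed = round(denials * appeal_rate)
    overturned = round(appealed * overturn_rate)
    return {
        "appealed": appealed,
        "overturned": overturned,
        "left_standing": denials - overturned,
    }


# Illustrative inputs taken from the rounded figures in the text above.
result = appeal_outcomes(denials=300_000, appeal_rate=0.01, overturn_rate=0.80)
share_standing = result["left_standing"] / 300_000

print(result)
print(f"Share of denials left standing: {share_standing:.1%}")
```

Under those assumptions, roughly 3,000 denials get appealed, about 2,400 are reversed, and more than 99% of the original denials stay in place simply because nobody challenged them.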

AI isn’t making better medical decisions, but it is making it easier to deny care at a scale that would be impossible with human review, while identifying exactly which patients are least likely to fight back.

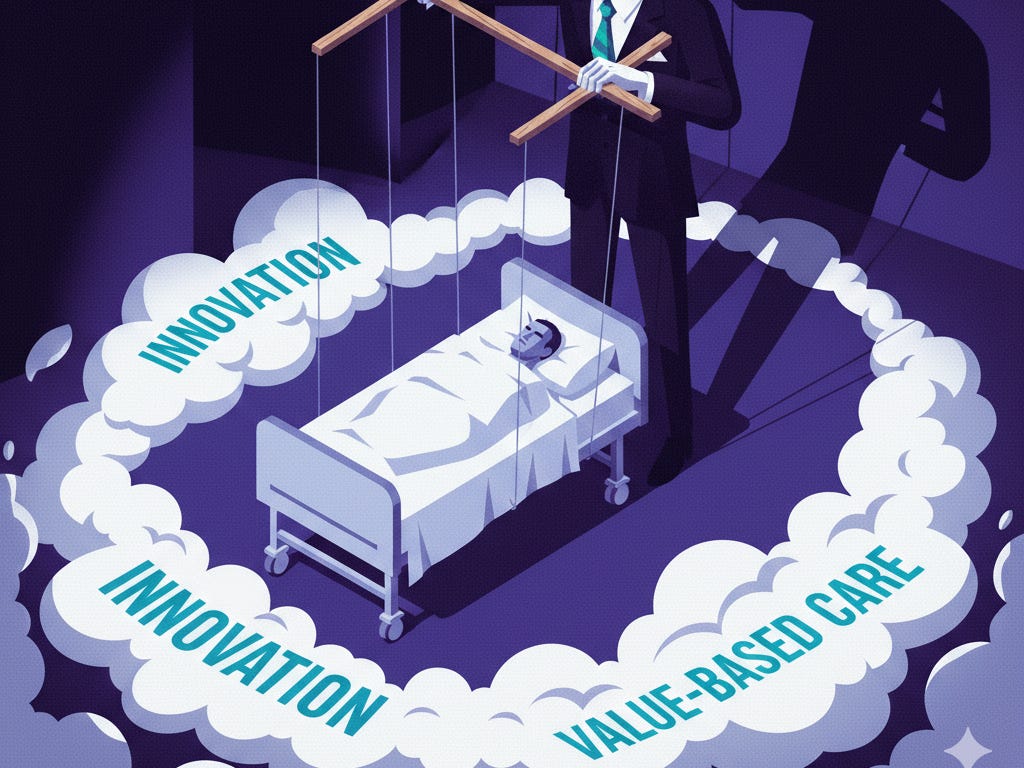

The Smokescreen

Here’s what makes this so insidious: the same language that describes beneficial AI provides perfect cover for predatory AI.

When insurance companies talk about “innovation” and “improving patient outcomes” and “value-based care,” they’re using words that sound like they mean something good for you.

Better treatment.

Personalized care.

Quality over quantity.

But “value-based care” doesn’t mean care that’s valuable to you. It means they’ve calculated the dollar value of keeping you alive versus letting you die.

“Improving patient outcomes” could mean catching diseases earlier, or it could mean denying expensive treatments so efficiently that you give up before you even start.

“Innovation” could mean life-saving technology, or it could mean algorithms that identify which patients won’t live long enough to make it through the appeals process.

This Is What AI Risk Actually Looks Like

We’ve been so focused on sci-fi scenarios about AI becoming conscious and turning against humanity that we’ve completely missed what’s happening right now.

AI is dangerous because we’re deploying it into systems that are already broken, controlled by entities with massive profit incentives and zero accountability, at a scale that makes individual harm invisible.

The healthcare system was already predatory. Now, while we argue like cavemen: “AI GOOD!” versus “AI BAD!”, these companies are profiting more and more without meaningful transparency or oversight, wrapped in language that makes it impossible for regular people to distinguish between innovation that helps them and automation that harms them.

And they’re betting on you not being able to tell the difference.

In 2023, 73 million in-network claims on ACA marketplace plans were denied—an average denial rate of 19%. And 82% of physicians report that patients abandon treatment due to authorization struggles.

These are people who needed care and couldn’t get it, didn’t know they could appeal, or lost hope fighting their insurance company while sick. People have died waiting for approval that should never have been required in the first place.

And no one bears personal responsibility when a denial leads to harm or death.

The system is designed so that blame diffuses across algorithms, automated processes, and corporate structures. There’s no one person to hold accountable when someone dies because their claim was denied in 1.2 seconds.

Sound familiar?

What You Can Actually Do

I can’t promise you that anything I suggest here will fix a fundamentally broken system. But I can promise that staying silent and compliant is exactly what they’re counting on.

USE AI AGAINST THEM & APPEAL EVERY DENIAL

The data is clear: Most denials get overturned. They’re banking on you being too exhausted, too sick, too overwhelmed to fight. Tools like these can help you fight back and understand what is happening with your health insurance—FOR FREE!

FightHealthInsurance.com – Free AI tool where you upload a denial letter and it drafts an appeal you can edit and send.

Counterforce Health – Nonprofit that generates detailed, customized appeal letters at no cost, using AI plus prior successful appeals and medical evidence.

Triage Health Appeals Navigator – Free AI‑based navigator that helps people with serious illness understand their rights and generate tailored appeal letters.

DOCUMENT EVERYTHING

Keep records of every denial, every appeal, every timeline. Not because you’re necessarily going to sue (though you might), but because patterns become visible when you write them down. Your individual denial might feel like bad luck or a one-off mistake. Your documented pattern of denials tells a different story.
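If a spreadsheet feels like too much, one way to keep that record is a small script. The sketch below is only a suggestion in Python: the file layout, the field names, and the helper functions (`append_record`, `load_records`, `count_reasons`) are all hypothetical, and the entries in the demo are invented placeholders, not real claims.

```python
import csv
import tempfile
from collections import Counter
from dataclasses import asdict, dataclass, fields
from pathlib import Path


@dataclass
class DenialRecord:
    # Hypothetical fields; adjust them to whatever your denial letters contain.
    date_received: str  # ISO date such as "2025-03-14"
    insurer: str
    service: str
    reason_given: str
    appeal_filed: str = ""  # date you sent the appeal, blank if not yet filed
    outcome: str = ""       # e.g. "overturned", "upheld", blank if pending


def append_record(path: Path, record: DenialRecord) -> None:
    """Append one denial to a CSV log, writing the header if the file is new."""
    is_new = not path.exists()
    with path.open("a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=[fl.name for fl in fields(DenialRecord)])
        if is_new:
            writer.writeheader()
        writer.writerow(asdict(record))


def load_records(path: Path) -> list[DenialRecord]:
    """Read the whole log back as a list of records."""
    with path.open(newline="", encoding="utf-8") as f:
        return [DenialRecord(**row) for row in csv.DictReader(f)]


def count_reasons(records: list[DenialRecord]) -> Counter:
    """Tally how often each stated denial reason appears."""
    return Counter(r.reason_given for r in records)


# Demo with invented entries, written to a temporary folder so nothing is left behind.
with tempfile.TemporaryDirectory() as tmp:
    log = Path(tmp) / "denials.csv"
    append_record(log, DenialRecord("2025-01-10", "Example Insurer", "MRI", "not medically necessary"))
    append_record(log, DenialRecord("2025-02-02", "Example Insurer", "physical therapy",
                                    "not medically necessary", appeal_filed="2025-02-05"))
    for reason, count in count_reasons(load_records(log)).most_common():
        print(f"{count} x {reason}")
```

The tally at the end is the point: once the same stated reason shows up again and again, the denials stop looking like isolated bad luck.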

TALK ABOUT IT

Not in a “raise awareness” way, but in a “this happened to me, did it happen to you?” way. Share your experiences with friends, family, in online communities. The insurance companies benefit enormously from everyone thinking their denial is an isolated incident. When we start connecting our individual experiences, the systematic nature of this becomes impossible to ignore.

It’s Up To Us—Again

If we keep waiting for someone else to fix it, for Congress to act, for regulators to intervene, for the companies to suddenly care, we’re just giving them more time to perfect the system that’s crushing us.

So appeal.

Document.

Talk about it.

Use AI tools against them.

Make them work for every denial.

Make the pattern visible.

Because they’re counting on your silence.


> AI is dangerous because we’re deploying it into systems that are already broken, controlled by entities with massive profit incentives and zero accountability, at a scale that makes individual harm invisible.

So basically, mirroring what we're doing for recruitment/hiring. In STEM fields, at least, the system was already problematic before – set up to reward performative ability over actual job-related skills – but AI is making it worse. In addition to résumé embellishment, cherry picking (literally every reference list), and numerous lies of omission, we now have applicants sending in AI-generated application materials and hiring managers using AI to filter them.

Hiring people is a nightmare. And it's probably worse for the people I'm hiring, who send one application after another into the void.

The 1.2-second review stat is just staggering. I work in tech and we'd never ship a product with that kind of quality bar, but somehow it's acceptable when the stakes are people's health. The scariest part is how the appeals process is designed to exhaust you rather than actually adjudicate claims fairly. I've had family members give up on treatments because the paperwork felt more draining than the illness itself.